LLM-driven coding triggers an "entropy explosion," shifting the bottleneck from creation to delivery. DevOps must adopt policy-as-code and Platform Engineering to manage increased velocity, complexity, and technical debt.

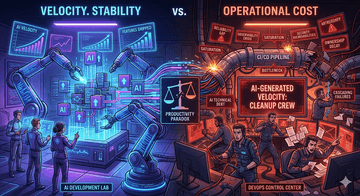

The surge in AI-assisted development has triggered a productivity paradox: while the rate of code generation has reached an all-time high, the capacity to operate and maintain that code has fallen into deficit. This shift is rapidly transforming DevOps from a streamlined delivery engine into a high-pressure "cleanup crew" for AI-generated velocity.

The Core Conflict: Unearned Velocity vs. Systemic Stability

AI coding assistants function as massive force multipliers, enabling engineers to ship features at a cadence that legacy CI/CD pipelines were never architected to sustain. However, this speed is often "unearned." When logic is prompted rather than engineered through deep architectural reasoning, the cognitive load is not eliminated—it is merely deferred downstream to the operations team.

The 5 Technical Burdens of the AI Era

Pipeline Saturation (The CI/CD Bottleneck)

AI tools saturate the pipeline with a high volume of granular, high-frequency changes, overtaxing build infrastructure. The Impact: Extended queue times, exhausted runner resources, and "build flakiness" as ephemeral environments struggle to synchronize with commit frequency.
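
One common way to relieve this kind of queue pressure is to coalesce pending builds so that only the newest commit on each branch is actually built. The sketch below is a minimal illustration of that idea, not a feature of any particular CI system; `BuildRequest`, its fields, and `coalesce_builds` are hypothetical names invented for the example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class BuildRequest:
    """A queued CI job (hypothetical shape, for illustration only)."""
    branch: str       # branch the commit was pushed to
    commit: str       # commit identifier
    queued_at: float  # enqueue time in seconds


def coalesce_builds(queue: list[BuildRequest]) -> list[BuildRequest]:
    """Keep only the most recently queued request for each branch."""
    latest: dict[str, BuildRequest] = {}
    for request in queue:
        current = latest.get(request.branch)
        if current is None or request.queued_at >= current.queued_at:
            latest[request.branch] = request
    # Return survivors in the order they were queued.
    return sorted(latest.values(), key=lambda r: r.queued_at)


if __name__ == "__main__":
    pending = [
        BuildRequest("feature/login", "a1", 1.0),
        BuildRequest("main", "b1", 2.0),
        BuildRequest("feature/login", "a2", 3.0),
    ]
    for request in coalesce_builds(pending):
        print(request.branch, request.commit)
```

The trade-off is that intermediate commits are never built on their own, so a failure on the surviving commit may need bisecting across the skipped ones.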

The Reliability Gap (Architectural Debt)

Large Language Models (LLMs) excel at function-level logic but lack a holistic understanding of global state or distributed dependencies. The Impact: "Fragile deployments" where isolated code successes trigger cascading failures across microservices, complicating root-cause analysis.
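
To make the cascading-failure risk concrete, a team can estimate the "blast radius" of a change by walking the service dependency graph. The sketch below assumes a hand-maintained map from each service to the services that call it; the service names and the `affected_services` helper are hypothetical, and a real system would more likely derive the graph from tracing data or a service catalog.

```python
from __future__ import annotations

from collections import deque


def affected_services(dependents: dict[str, list[str]], changed: str) -> set[str]:
    """Return every service that directly or transitively depends on `changed`."""
    seen: set[str] = set()
    pending = deque([changed])
    while pending:
        service = pending.popleft()
        for dependent in dependents.get(service, []):
            # Skip services already visited, and the changed service itself
            # in case the graph contains a cycle.
            if dependent not in seen and dependent != changed:
                seen.add(dependent)
                pending.append(dependent)
    return seen


if __name__ == "__main__":
    # Hypothetical graph: key -> services that depend on the key.
    graph = {
        "auth": ["billing", "search"],
        "billing": ["checkout"],
        "search": [],
        "checkout": [],
    }
    print(sorted(affected_services(graph, "auth")))
```

A pipeline could use the resulting set to decide which integration suites must run before an isolated, passing change is allowed to deploy.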

Observability "Signal-to-Noise" Crisis

Increased code volume produces a corresponding flood of logs, traces, and metrics. Without standardized instrumentation, AI-generated services often lack actionable telemetry. The Impact: Severe alert fatigue; on-call engineers spend critical time untangling noisy dashboards to resolve incidents in codebases they didn't write.
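
Standardized instrumentation can start very small: a shared logging helper that refuses to emit a record unless the fields on-call engineers rely on are present. The sketch below assumes three required fields (`service`, `trace_id`, `severity`); both the field list and the `make_log_record` helper are illustrative choices, not an established schema.

```python
import json

# Hypothetical minimum set of fields every log record must carry.
REQUIRED_FIELDS = ("service", "trace_id", "severity")


def make_log_record(message: str, **fields: str) -> str:
    """Serialize a structured log line, rejecting records with missing context."""
    missing = [name for name in REQUIRED_FIELDS if not fields.get(name)]
    if missing:
        raise ValueError(f"log record missing required fields: {', '.join(missing)}")
    # sort_keys keeps output stable, which makes diffs and tests easier.
    return json.dumps({"message": message, **fields}, sort_keys=True)


if __name__ == "__main__":
    print(make_log_record(
        "payment failed",
        service="billing",
        trace_id="abc123",
        severity="error",
    ))
```

Failing loudly at the call site moves the cost of missing telemetry to development time instead of to the middle of an incident.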

The Expansion of the Security Shadow Surface

The sheer speed of AI output can bypass traditional security gates. Insecure logic, vulnerable dependencies, or hardcoded secrets can slip through if human code reviews become "rubber-stamp" exercises to keep pace with generation. The Impact: DevOps must implement more aggressive, automated DevSecOps guardrails, which paradoxically throttle the very velocity AI was intended to provide.
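
As a rough illustration of an automated guardrail, the sketch below scans the added lines of a change for a few secret-like patterns before merge. The three rules and the `find_secrets` helper are assumptions made for the example. Dedicated secret scanners ship far larger, better-tuned rule sets, and a regex pass like this will produce both false positives and false negatives.

```python
from __future__ import annotations

import re

# Illustrative rules only, not a complete or production-grade rule set.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(
        r"-----BEGIN (?:RSA |EC |OPENSSH )?PRIVATE KEY-----"
    ),
    "hardcoded_password": re.compile(
        r"(?i)\bpassword\s*=\s*['\"][^'\"]{4,}['\"]"
    ),
}


def find_secrets(added_lines: list[str]) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) for every added line that matches a rule."""
    findings = []
    for number, line in enumerate(added_lines, start=1):
        for rule, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((number, rule))
    return findings


if __name__ == "__main__":
    diff_lines = [
        "timeout = 30",
        'aws_key = "AKIAIOSFODNN7EXAMPLE"',  # AWS's documented example key
        'password = "hunter22"',
    ]
    for number, rule in find_secrets(diff_lines):
        print(f"line {number}: {rule}")
```

Wired into a pre-merge check, a non-empty result would fail the build, so the gate holds even when human review is rushed.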

Ownership and Governance Decay

As AI writes a larger percentage of the codebase, the "You Build It, You Run It" paradigm erodes. When no single human fully internalizes the logic, the DevOps team becomes the de facto owner of every production outage. The Impact: Ambiguous accountability, delayed incident response, and a regressive shift toward "operational firefighting."
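
One way to keep accountability explicit is to treat ownership as a policy that the pipeline checks: every changed file must fall under a path with a named owning team. The sketch below assumes a simple prefix-to-team mapping; the `OWNERS` table, the team names, and the `unowned_paths` helper are hypothetical and only loosely modeled on the idea behind CODEOWNERS-style files.

```python
from __future__ import annotations

# Hypothetical ownership map: path prefix -> owning team.
OWNERS = {
    "services/billing/": "team-billing",
    "services/search/": "team-search",
}


def unowned_paths(changed_files: list[str], owners: dict[str, str]) -> list[str]:
    """Return the changed files that no registered prefix covers."""
    return [
        path
        for path in changed_files
        if not any(path.startswith(prefix) for prefix in owners)
    ]


if __name__ == "__main__":
    changed = [
        "services/billing/invoice.py",
        "services/recommendations/model.py",
    ]
    for path in unowned_paths(changed, OWNERS):
        print(f"no owner registered for {path}")
```

Blocking merges that introduce unowned paths forces the ownership question to be answered before the code reaches production rather than during an outage.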

Conclusion: Scaling Maturity with Speed

The "DevOps Burden" is not a failure of AI, but a gap between generation speed and governance maturity. As recent engineering updates from GitHub (https://github.blog/news-insights/company-news/an-update-on-github-availability/) demonstrate, even the most robust infrastructures face scaling bottlenecks and reliability challenges under the weight of rapid usage growth and AI-driven activity.

To survive this shift, organizations must pivot from faster generation to hardened delivery. Without automated testing discipline and sophisticated observability, AI does not deliver innovation—it delivers high-speed technical debt.

#GenAI #DevSecOps #CloudNative #EngineeringManagement #AlokaTech